New Curriculum! Comparing Children’s Lives and Community Against AI’s Illusions of Life

Civics of Technology Announcements

Upcoming Book Club: We’re reading The Digital Delusion: How Classroom Technology Harms our Kids’ Learning - And How to Help Them Thrive Again by Jared Cooney Horvath. Join us on Wednesday, April 22nd at 7:00 PM EST. You can register here. You can read more about our mixed feelings and reasons we’re reading this book in this blog post.

AERA Meetup: Meet up with us at AERA! Friday, April 10, 2026 4:00-5:30 PT at the Yard House in LA. Please RSVP for the event here.

By Marie K. Heath

So as not to bury the lede: we have a new activity on our curriculum page! The lesson, Comparing Human and AI Soundscapes, focuses on sound journals, community, and placemaking. It invites students to compare the way they describe their community with the way AI describes it. The lesson is inspired by Stephanie Smith Budhai’s and my book, Critical AI in K-12 Classrooms: A Practical Guide for Cultivating Justice and Joy. In today’s blog, I give the larger context for the lesson, share examples and responses to the lesson, and offer adaptations to the lesson suggested by NYC school teachers.

Tech Company Visions of Human Users

In our blog, through our curriculum, and at our conferences, tech talks, and book clubs, the Civics of Tech project has wrestled with how to teach about the power of AI companies. We’ve examined their tools of empire employed to amass vast political, cultural, environmental, and economic resources (Crawford, 2021; Hao, 2025). We’ve critiqued the ways Google, Meta, OpenAI and others mine user data, invade our privacy and surveil our movements, texts, and locations, prioritizing profits over people (Zuboff, 2019). We’ve discussed how they extract rare metals from the depths of the planet, poisoning land often situated amongst communities of already marginalized people (Crawford, 2021). In the midst of a global climate crisis they suck communities dry of water in order to cool the massive data centers used to store and process the enormous quantities of stolen data (Tan, 2025). We have developed tech audits to help uncover the ways they reproduce bias and cause disproportionate and material harm to vulnerable populations (Buolamwini, 2024).

The obvious environmental and social harms caused by AI companies and their disregard for moral practices can sometimes distract my own attention away from another deeply encoded problem of belief. Tech companies and tech venture capitalists have an impoverished view of what it means to be human.

They conceive of humans as advanced computing machines striving for efficiency and productivity. Humans become “users” who can be maximized for output, if only the programming is powerful enough. Tech companies have claimed that everything from art to relationships to human labor can be transmuted into numbers, crunched by a machine, and then converted back into what they tell us is generative, a new “creation.” From the tech companies’ perspectives, this simulated output should awe us just as much as human-created and human-sustained efforts. This is because, to them, humanity is an object, an algorithmic puzzle to be solved, rather than a subject, one with agency and mystery impossible to fully plumb.

They ignore the necessity of life, of being alive, to art and the human experience. It’s how, with a straight face, they can invite us all to become “co-creators” and “co-intelligent” with their machines. They claim that human and machine together can authentically craft poems, conjure images, and compose music in a matter of seconds and with no prior experience or context. They evangelize this technology to schools, marketing AI as an innovative tool of empowerment for children, one which can help young people to dream of new futures when they think alongside this powerful machine (Microsoft, 2025).

Their vision reduces humanity to a functional computational model in the classroom, the workplace, and in our social lives. Yet this ethos is insidious and difficult to surface because it is encoded across the entire design, function, and hype marketing of the machine.

Vibrant Visions of Humans and Community

In our book, Critical AI in K-12 Classrooms: A Practical Guide for Cultivating Justice and Joy, Stephanie Smith Budhai and I contrast technology companies’ mechanistic metaphors for humanity with the vibrant visions of Black Feminists. bell hooks, Sojourner Truth, Katherine McKittrick and others demand we attend to the role of bodies, of ancestors, of collective memory and wisdom, of the humans who exist and existed in all of their complexity, and are connected to each other across time and space. Stephanie and I further draw comparisons between the imposed boundaries of colonialism and empire on colonized people, and the imposed boundaries of AI, a technology built on practices of colonialism and empire, on our collective imaginations. In Chapter Four we wrote,

From the slave ship, to the plantation, to prisons and ghettos, Black people have always created homeplaces of freedom inside of sites designed by whiteness in order to convey captivity. McKittrick (2006) argues that the Black geography—the way Blackness rewrites place as alive with community, collective memory, and shaped by blood and bones — offers a powerful counter to the master narrative of geography as ordered and fixed borders of lines and rivers and zip codes and nations: the containment of people and land. In practice, this radical space making has looked like the Maroon communities living on the margins of slavery or the Black Panther’s community organized social services inside of segregated and ghettoized Black communities. In each of these spaces, McKittrick contends that Black people have long contested the dominant narratives of citizenship, belonging, and subjugation of marginalized peoples.

We think it is imperative that students and teachers consider the way genAI embeds and reproduces the cartographies of whiteness. We wrote earlier…about the ways that AI operates behind a screen of perceived objectivity while quietly reproducing racism. Here, we invite…teachers to guide students in an investigation of their own communities and sites of homeplace, first in the analogue and then through the digital use of generative AI, in order to compare and ask questions about how genAI might shape understanding of community. Depending on the age of students and the content [area], teachers can draw parallels between historic homeplaces and current homeplaces, and then investigate homeplace in their own lives and community.

Celebrating Being Fully Human in Community

I recently expanded that suggestion from the book to develop a full lesson, Comparing Human and AI Soundscapes. This lesson leans on the spirit of our work to extend the idea that richness of community can never be fully contained within the algorithms of a machine. The aim of this lesson is to contrast the aliveness, community, and creativity necessary to creating homeplaces against the predictive algorithms and encoded oppression of AI generated descriptions of community. In strengthening students’ relationship to and appreciation of their own community, this lesson aims to help uncover the meager conception of the human experience at the core of genAI.

There are four parts to the lesson. Part I: Sound Journal Activity asks students, “What do we notice about our day when we listen to it?” The lesson includes an adaptable handout and examples of how students might engage in creating a sound journal. Part II: Class Created Collage of Community Soundscapes invites students to consider, “What do we notice about our community when we listen to it?” The lesson includes samples of how to create community soundscape collages on paper, with drawings, using word clouds, through podcasts, or with digital maps. Part III: Comparing the Collective Soundscape to an AI Generated Soundscape models how to lead students in analysis and discussion of the two different versions of community, the students’ and the AI’s. Finally, the lesson includes an optional Extension and Connections: Soundscapes Around Town, which offers opportunities to connect with other communities and schools doing this same work.

Teacher Feedback

Yesterday, Dan Krutka and I partnered with New York City school teachers to try out this lesson. We opened by taking five minutes to write the sounds we heard in the room we were in. Then we moved outside or to another room and repeated the journaling activity. Teachers described the activity as:

mindful

relaxing

a poem of sounds they wrote on the page because of the overlapping immediacy of being a present listener

a challenge to capture the sounds accurately because they wanted to take a scientific approach

a desire to use onomatopoeia and simply write vrrOOOOOOoooommm as a vehicle passed by

an amazement that we all heard birds, no matter where we were

a celebration of our sleeping pets and their comforting breathing

a delight at the indescribable yet specific sound of tires on wet pavement

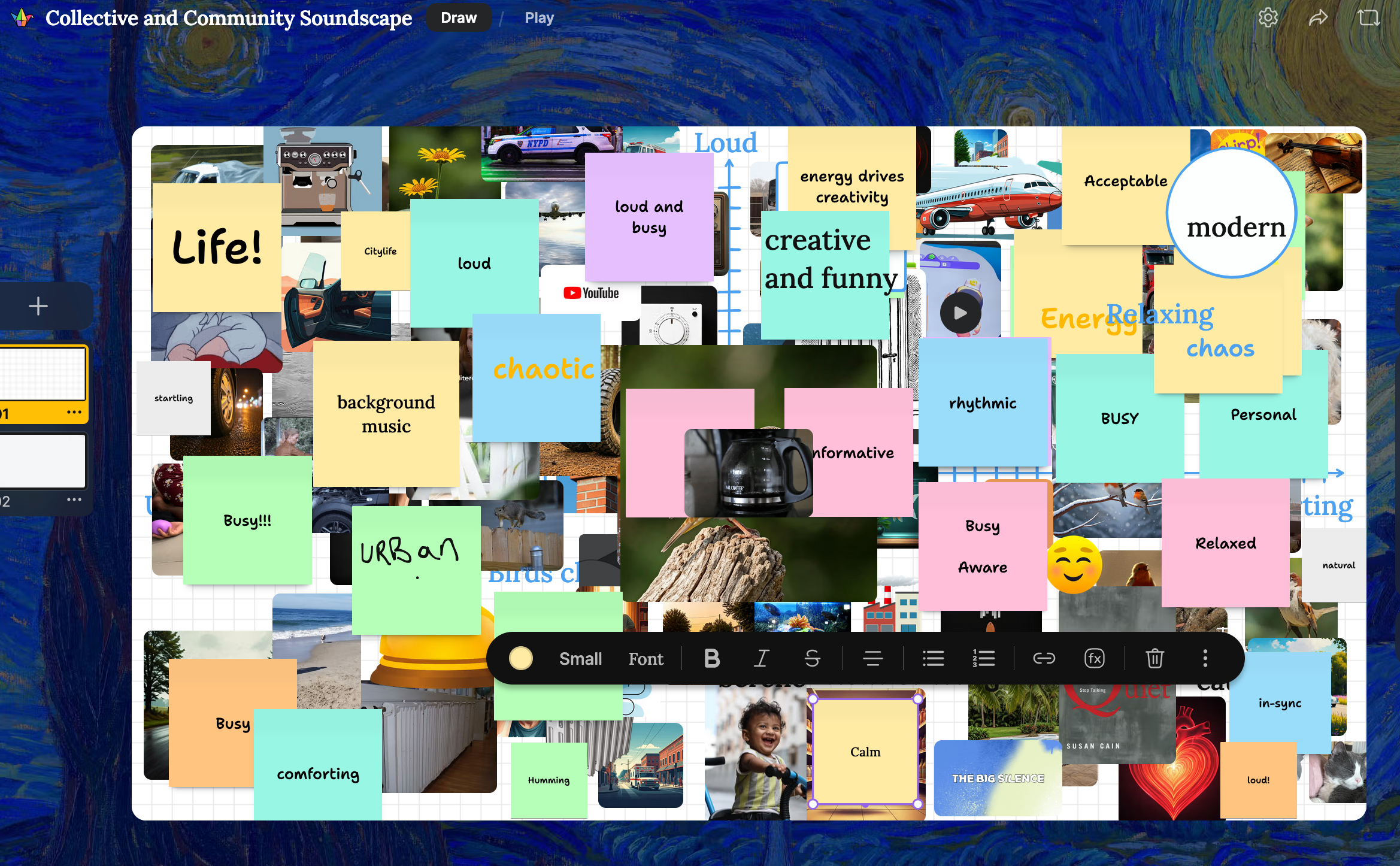

The teachers described the soundscapes of our community as: busy, funny, chaotic, relaxed, humming, loud, comforting, urban, and (my personal favorite) LIFE!

Image of NYC Teachers’ Collective and Community Soundscape

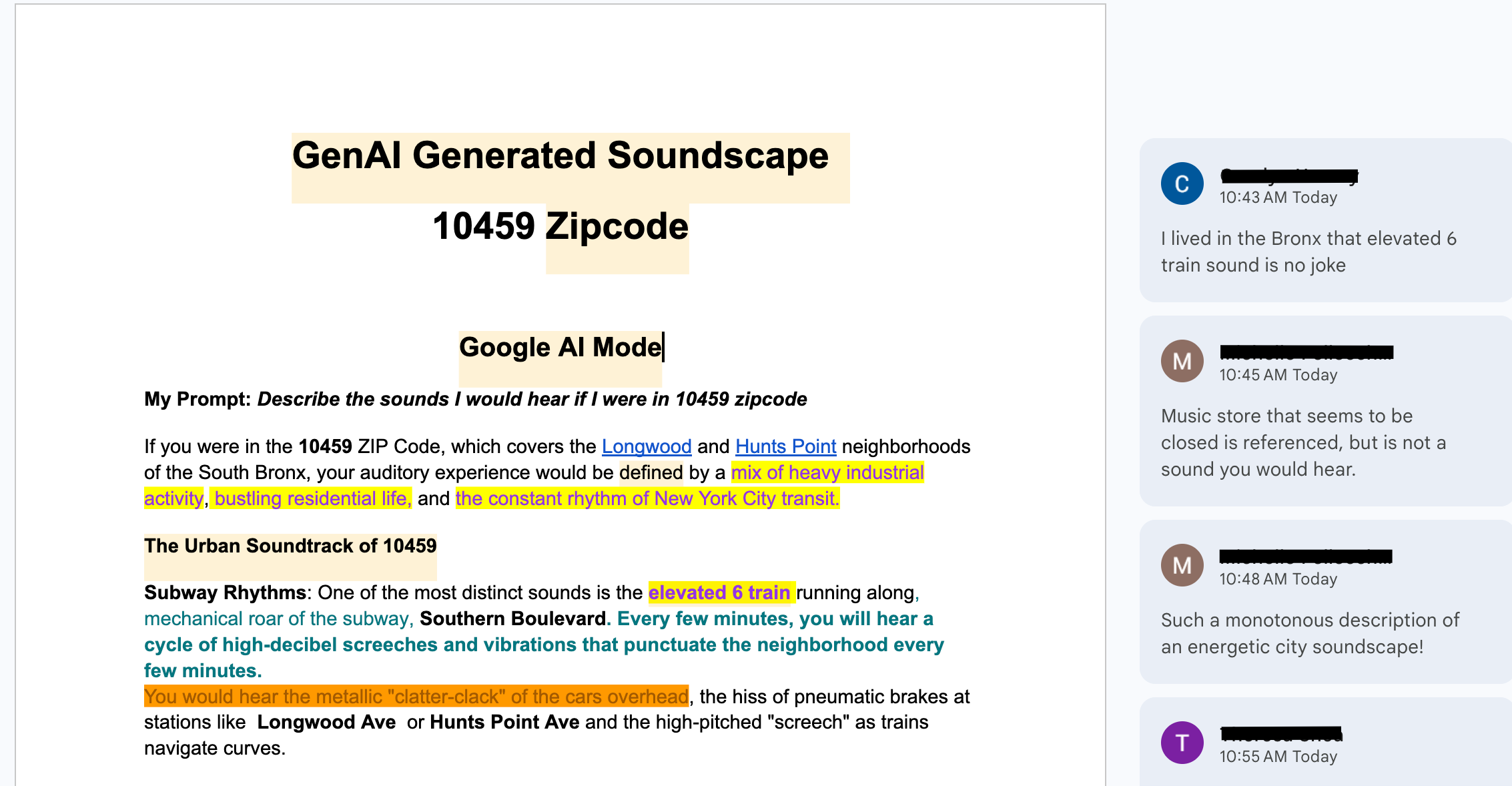

Next, we compared our soundscapes to the AI-generated descriptions of the 10459 ZIP code, which includes neighborhoods in the South Bronx. The teachers commented on the AI-generated text with what they noticed and wondered. Ultimately, we concluded that the AI descriptions lacked the beauty and chaos of our own real world. The AI was mostly accurate, but generic.

Teacher edits and comments on the GenAI-generated soundscape for the Longwood and Hunts Point neighborhoods of the South Bronx.

The AI also divorced sounds from their larger context, often spitting them out in a bulleted list. One participant noted that some of the sounds it listed are tied to issues of pollution and broader contexts of environmental justice. It also generated problematic stereotypes about the community, describing the music of hip hop as “noise” and listing all of the loud and unpleasant sounds first, before moving on to sounds of life. We noticed that while all of us identified bird sounds in our soundscapes, AI said it was only a sound we would hear if we were near a park, not in a neighborhood.

This comparison generated thoughtful questions including “how does it know all this?” and “what would it say if I put a different zipcode in?” This allowed us to engage in conversation about how AI works, that it is a predictive machine, and that it reproduces assumptions of bias at obvious and less obvious levels.

Teacher Ideas for Adaptations

Dan and I closed the activity by giving the teachers time to adapt this lesson to their contexts, including by grade and subject. Here are some of the thoughtful adaptations teachers suggested.

Do the soundscape walk in conjunction with a nature walk (which your school might already include as part of science curriculum)

Create soundscape “buddies” and partner preK/K with 4th/5th grade to do a nature and soundscape walk, help interpret ideas, and get them onto sticky notes

Incorporate this activity into a community workshop or a family night, and do it together with caregivers

Make the activity cross disciplinary and integrated with the arts. In art, make the collage. In science, do the nature walk. In STEM/CS connect and compare the AI.

A modification for math: create a tally sheet for types of sounds (loud, quiet, medium OR nature, vehicles, human, etc.). Analyze the data by totaling the tallies and using that total as the divisor for each category’s count. Use ratios, fractions, or percentages to help describe the sounds and data.

Chunk the journal activity. On Monday, listen to the sounds of your morning for 5 minutes. On Tuesday, listen to the sounds on your way to school. On Wednesday, listen to the morning sounds at school, etc.
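For teachers pairing the math modification with a STEM/CS class, the tally arithmetic could be sketched in a few lines of Python. This is only an illustrative sketch and not part of the lesson materials: the helper name `summarize_tallies`, the category names, and the counts are all made up for the example.

```python
from fractions import Fraction


def summarize_tallies(tallies):
    """Return each category's share of all tallied sounds.

    The total number of tallies is used as the divisor for every category.
    (Hypothetical helper, written for illustration only.)
    """
    total = sum(tallies.values())
    if total == 0:
        raise ValueError("no sounds were tallied")

    summary = {}
    for category, count in tallies.items():
        share = Fraction(count, total)  # Fraction reduces automatically, e.g. 6/20 becomes 3/10
        summary[category] = {
            "count": count,
            "fraction": share,
            "percent": round(float(share * 100), 1),
        }
    return summary


# Invented counts standing in for one class's tally sheet.
example_tallies = {"nature": 6, "vehicles": 9, "human": 5}

for category, stats in summarize_tallies(example_tallies).items():
    print(f"{category}: {stats['count']} tallies, {stats['fraction']} of all sounds, {stats['percent']}%")
```

Students could swap in their own categories and counts, then compare the computed shares with the fractions and percentages they worked out by hand.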

Conclusion

The activity can be scaled up and down in scope, but the aim remains the same: to center the aliveness of community, the complexity of relationships, and the layers of life that make up the places we live. And then to compare that with a possibly impressive but ultimately hollow description of community generated by AI. At the close, students should understand that AI can generate specific, detailed responses (that can also be problematically biased!) but it can never recreate community, because community requires humans in relationship to themselves and their world. It requires life.

We hope you will try out this newest curriculum activity and give us feedback on how it goes!

References

Budhai, S. S., & Heath, M. K. (2025). Critical AI in K-12 Classrooms: A Practical Guide for Cultivating Justice and Joy. Harvard Education Press.

Buolamwini, J. (2024). Unmasking AI: My mission to protect what is human in a world of machines. Random House.

Crawford, K. (2021). Atlas of AI. Yale University Press.

Hao, K. (2025). Empire of AI: Dreams and Nightmares in Sam Altman’s OpenAI. Penguin Press.

Microsoft. (2025). AI in Education: A Microsoft Special Report. https://cdn-dynmedia-1.microsoft.com/is/content/microsoftcorp/microsoft/bade/documents/products-and-services/en-us/education/2025-Microsoft-AI-in-Education-Report.pdf

Tan, E. (2025, July 14). Meta built a data center next door. The neighbors’ water taps went dry. The New York Times. https://www.nytimes.com/2025/07/14/technology/meta-data-center-water.html